Epsilon Theory contributor Neville Crawley is back with an interview of Adam Julian Goldstein, discussing Adam’s fascinating new work on anxiety. If, like me, you have the entrepreneurial bug (and it is a bug, not a feature), this is a must-read!

I’m very pleased to be interviewing Adam Julian Goldstein for Epsilon Theory. Adam has lived the entrepreneurial dream: from founding a company in his dorm room at MIT, to founding a second company, Hipmunk, that would become one of the biggest and best-known brands in the travel industry and be acquired by SAP, to now investing in other entrepreneurs.

Despite these successes, Adam has – like all of us – also suffered from anxiety and the negative performance effects that it can produce. Adam has recently been reflecting on his journey and his and others’ experiences of anxiety as entrepreneurs and risk takers to consider “Is there such a thing as the anxiety algorithm, and if so how does it work, and what might we do to optimize it?”

Could you first set out your high-level Anxiety Algorithm thesis and what led you to this concept?

As we grew at Hipmunk, I thought I should feel great because things were going our way. But I was actually more anxious. As I noticed this among other founders, I started wondering, “why are we designed this way?”

So I approached the problem like an engineer: if I were designing a system to imagine different possibilities for the future, what algorithm would I use to generate these possibilities? Which of these futures would I make it worry about? And how would I have it revise its beliefs over time?

I theorized that there would be tractable answers to these questions: simple information-processing algorithms that would provide a real survival advantage. I wondered what behavior would emerge as a result of these simple algorithms, and whether that behavior would align with my own experience and that of other people I’ve worked with. It turned out to explain a lot.

I find your ‘expanding search space’ model for anxiety highly compelling, and as an explanation of why founders are particularly prone to anxiety. Could you explain this?

It’s impossible to be certain what the future holds, so the real question is, how can you make an algorithm as good at guessing as possible? You could try hard-coding possible futures, but that’s extremely fragile if the world turns out differently than expected.

Our immune system faces a similar challenge, because viruses and bacteria are mutating far more quickly than humans are evolving. So the immune system has a clever approach: “imagining” future threats by shuffling up little snippets of possible threats at random, over and over, until it’s imagined the shape of most viruses and bacteria that could ever exist.

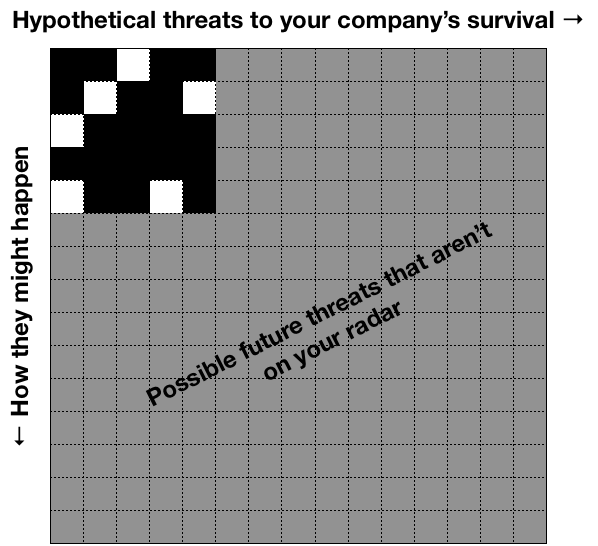

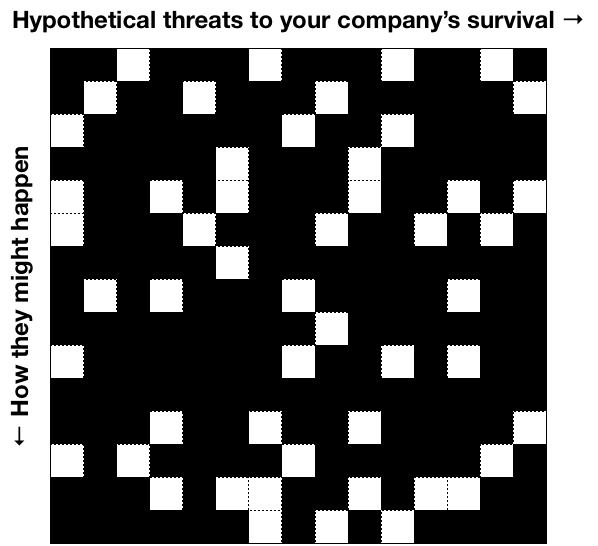

The first anxiety algorithm takes some little snippets of possible futures and shuffles them up at random to imagine what might happen. When the world is changing (e.g. because your company now has investors, customers, employees, etc.), the number of snippets increases, and therefore so does the space of possible futures.

Pre-launch (left), the number of known failure modes is small. Post-traction (right), the number of known failure modes is huge.

This explains why for me, traction resulted in more anxiety. Even as success got nearer, the space of possible ways to fail expanded.
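As a rough illustration of the expanding search space (a sketch, not code from Adam's essays), the snippet below counts how many two-snippet futures can be imagined from a small list versus a larger one, and draws one at random. The snippet names, the choice of pairing two snippets at a time, and the function names are all assumptions made for this example.

```python
import random
from itertools import combinations


def count_futures(snippets, size=2):
    """Count the distinct futures formed by combining `size` snippets."""
    return sum(1 for _ in combinations(snippets, size))


def imagine_future(snippets, size, rng):
    """Shuffle up one possible future by sampling `size` snippets at random."""
    return tuple(rng.sample(snippets, size))


# Hypothetical snippet lists, for illustration only.
PRE_LAUNCH = ["cofounder", "product", "runway"]
POST_TRACTION = PRE_LAUNCH + [
    "investors", "customers", "employees", "partners", "competitors",
]

print(count_futures(PRE_LAUNCH))     # 3 possible pairings
print(count_futures(POST_TRACTION))  # 28 possible pairings

rng = random.Random(0)  # seeded so repeated runs draw the same future
print(imagine_future(POST_TRACTION, 2, rng))
```

Going from three snippets to eight multiplies the number of imaginable pairings roughly ninefold, and the gap widens further if futures combine more than two snippets at a time.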

What has been the highest-anxiety moment of your entrepreneurial journey? Could you walk us through it and how you reflect on it now?

I was in the middle of fundraising for our Series D round, and Yahoo called us up and said they were ending our partnership because they were shutting down their travel site. We’d worked for years to put this partnership together and it generated a significant amount of our revenue—then it just disappeared. All my fears surfaced at once.

Our fundraise was, as I’d feared, a failure. My investors were, as I’d feared, unhappy. The employees I fired were, indeed, sad to be leaving, and I was sad for them.

However, I also learned that setbacks don’t need to be deadly as long as you don’t run out of money. Because once it became clear we had to restructure the company or else go out of business, we restructured everything I’d been afraid to before—cutting product lines, marketing channels, and growth plans. We got on the path to profitability and, less than a year later, we sold to SAP. So my biggest takeaway was that it was anxiety itself that kept me from making hard decisions; once I could no longer make excuses for inaction, I made the decisions and things got better.

But so many managers never have a forcing function like this, where inaction directly leads to failure. So instead, they listen to their anxiety when it tells them that it’s too risky to pivot, cut their burn rate, or reorganize their team—and, to use a baseball analogy, they strike out looking.

Let’s talk about Optimal Paranoia and why we are systematically over-concerned.

Let me first define what I mean by over-concerned: responding to something as if it’s a threat even when you know it’s probably not.

We all do this constantly. You refill your car when it has ¼ of a tank of gas—instead of waiting until the warning light comes on—so you don’t risk the tiny chance of running out of gas before you encounter another station.

My claim is that even though we’re systematically over-concerned in terms of raw risk likelihood, we’re somewhat “appropriately concerned” as a matter of survival. Running out of gas sucks, but even though it probably won’t happen, it’s not worth cutting it close.
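The gas-tank reasoning can be sketched as a simple expected-cost comparison: take the precaution whenever the probability of the bad outcome times its cost exceeds the cost of the precaution. This is one conventional way to frame the trade-off, not necessarily the model in Adam's essays, and every number and name below is a hypothetical chosen for illustration.

```python
def expected_loss(probability, loss):
    """Expected cost of an unlikely but expensive outcome."""
    return probability * loss


def should_take_precaution(probability, loss, precaution_cost):
    """Act when the expected loss outweighs the cost of acting."""
    return expected_loss(probability, loss) > precaution_cost


# Hypothetical numbers, in arbitrary cost units.
P_RUN_OUT = 0.01           # assumed chance of running dry before the next station
COST_STRANDED = 500.0      # assumed cost of a tow and a lost afternoon
COST_EARLY_REFILL = 2.0    # assumed cost of stopping a little sooner than needed

# 0.01 * 500 = 5, which exceeds 2, so refilling early is the better bet.
print(should_take_precaution(P_RUN_OUT, COST_STRANDED, COST_EARLY_REFILL))
```

Under these assumed numbers, refilling early is worthwhile even though running out is only a 1-in-100 event, which is the sense in which being "over-concerned" about likelihood can still be appropriate.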

The second anxiety algorithm shows what happens when this tendency gets applied to imagined futures. Even if we’re pretty sure a fear won’t come true, we err on the side of treating it as more likely than it is.

Moreover, we update our guess of how dangerous uncertain things are based on new experiences. When something good happens we tend not to dwell on it, but when something bad happens we tend to fear the worst. The third anxiety algorithm shows how this asymmetry results in higher survival odds—but also a great deal of suffering.
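One way to picture this asymmetry (a minimal sketch under stated assumptions, not the third anxiety algorithm itself) is an estimate that moves quickly toward "dangerous" after a bad experience and only slowly toward "safe" after a good one. The update rule, the learning rates, and the function names are assumptions introduced here.

```python
def update_threat_estimate(estimate, bad_outcome, up_rate=0.5, down_rate=0.05):
    """Move the estimate toward 1.0 after a bad outcome, toward 0.0 after a good one.

    The two rates are hypothetical: bad news is weighted ten times more
    heavily than good news by default.
    """
    target = 1.0 if bad_outcome else 0.0
    rate = up_rate if bad_outcome else down_rate
    return estimate + rate * (target - estimate)


def run_updates(outcomes, estimate=0.5, **rates):
    """Apply the update rule to a sequence of outcomes (True means bad)."""
    for bad_outcome in outcomes:
        estimate = update_threat_estimate(estimate, bad_outcome, **rates)
    return estimate


# Bad and good outcomes alternate, so the true frequency of bad is 50%.
history = [True, False] * 50

asymmetric = run_updates(history)
symmetric = run_updates(history, up_rate=0.5, down_rate=0.5)
print(f"asymmetric updater: {asymmetric:.2f}")
print(f"symmetric updater:  {symmetric:.2f}")
```

With these assumed rates, the asymmetric updater settles at roughly 0.9 even though bad outcomes occur only half the time, while the symmetric updater stays much lower.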

Your model suggests we are more likely to die from overreacting than underreacting. This is certainly being hotly debated right now with various COVID response reactions. What are your thoughts on leaders’ COVID responses and over- or underreactions?

When we systematically react as though threats are more likely than they actually are, we do keep ourselves safer. This is important as a starting point, because it’s tempting to say, “if we tend to overreact, we should just be more rational,” but that gets you back to running out of gas on the side of the road.

But it’s also true that when we systematically overreact (under certain assumptions), the deaths that do happen will more often arise from overreacting than underreacting.

We see this in COVID-19. It’s people’s immune overreactions that appear to be the leading cause of death from the virus. That might make you wonder why we have such aggressive immune systems, but if we didn’t, we’d die at much higher rates from other pathogens.

There’s a tendency to see that few US hospitals have been overwhelmed by COVID-19 patients yet and say, “see, we overreacted by implementing lockdowns.” Of course, if we hadn’t put the lockdowns in place, there would have been many more people who got sick and died. And yet, it’s conceivable that over the coming years more people will die from second- and third-order effects of the lockdowns (e.g. people cancelling non-essential doctors’ visits that could have caught tumors early, job losses that put people under extreme stress and raise their risk of heart attacks, etc.) than from the virus itself.

What makes COVID-19 unique compared to most threats is that it tends to spread exponentially. So I think this is a rare instance where being extremely paranoid, individually and collectively, was and is appropriate.

You also have the concept of The Attention Portfolio. It seems like we’ve had an invisible societal shift over the past decade to an Attention Portfolio that is more weighted to ‘Others’ due to increased information availability, social media, etc. Could you walk us through The Attention Portfolio and any thoughts you might have on this invisible shift?

The Attention Portfolio posits that we allocate our attention between three things: direct experience, imagination, and what other people say. Each of these has risks and rewards. For example, listening to other people can keep us from making naive mistakes (e.g. eating a poisonous mushroom), but other people can also be self-serving, which can hurt us (e.g. a CEO who lies about his company’s prospects so he can unload stock at a high price before it crashes).

Because the risks and rewards of each source of knowledge are different and have low correlation, my fourth anxiety algorithm proposes that we are designed like a sensible investor: to allocate our attention in a diversified portfolio of all three kinds of attention and rebalance the portfolio over time. For example, when we perceive the world as having become more dangerous, we spend less time experiencing the world directly (risky) and spend more time seeking information from other people (safer).
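The diversification argument borrows from Modern Portfolio Theory, where the variance of a portfolio depends on the weights, the individual risks, and the correlations between holdings. The sketch below applies that standard formula to three attention sources. Treating attention "risk" as a number, assigning each source the same risk, and using a single pairwise correlation are all simplifying assumptions made for illustration, not claims from Adam's essays.

```python
def portfolio_variance(weights, risks, correlation=0.0):
    """Variance of a weighted portfolio with one shared pairwise correlation.

    weights: fraction of attention given to each source (should sum to 1).
    risks: standard deviation assigned to each source (hypothetical values).
    correlation: assumed correlation between any two different sources.
    """
    total = 0.0
    for i, (w_i, s_i) in enumerate(zip(weights, risks)):
        for j, (w_j, s_j) in enumerate(zip(weights, risks)):
            rho = 1.0 if i == j else correlation
            total += w_i * w_j * s_i * s_j * rho
    return total


# Order of sources: direct experience, imagination, other people.
RISKS = [1.0, 1.0, 1.0]           # hypothetical: equal risk per source
ALL_IN_ONE = [1.0, 0.0, 0.0]      # all attention on direct experience
DIVERSIFIED = [1 / 3, 1 / 3, 1 / 3]

print(portfolio_variance(ALL_IN_ONE, RISKS))    # 1.0
print(portfolio_variance(DIVERSIFIED, RISKS))   # about 0.33 when uncorrelated
```

With uncorrelated sources of equal risk, splitting attention evenly cuts the variance to a third of the concentrated case. The benefit shrinks as the assumed correlation rises toward 1.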

Critically, the more we rely on other people to understand the world, the more susceptible we are to being shaped by their agendas—an emergent outcome which has dramatic society-wide consequences.

As you have transitioned from Founder/CEO to investing your own money as proprietary bets, have you found your personal anxiety as an investor to be more, less, or simply different than it was as an operating company entrepreneur?

My anxiety is much, much less now. When I ran a company, there were constantly new catalysts I could imagine for the company failing—partners, employees, investors, market valuations, competition, consumer behavior, marketing channels, etc. I felt like it was my responsibility to get ahead of each of those or else break trust with the people at the company who relied on me.

These days as an investor, I know exactly what the failure mode is for each investment: it goes down. But I’m single and live cheaply, and if I lose everything, I won’t be letting anyone else down. More than making money, I enjoy learning about esoteric investments and meeting new companies solving interesting problems. I imagine I’ll be more risk-averse if and when I have kids.

Given that we know we need a certain amount of anxiety, and that the amount is dynamic based on the environment, what actions would you recommend to stay on the efficient frontier?

Despite all the ills of our modern world, it’s empirically a safer time to be a human than at any time in recorded history. This suggests that today’s anxiety is on average higher than it should be even as a matter of survival. (I suspect this is especially true among the readership of ET.) I talk about some techniques for reducing anxiety at the end of each essay.

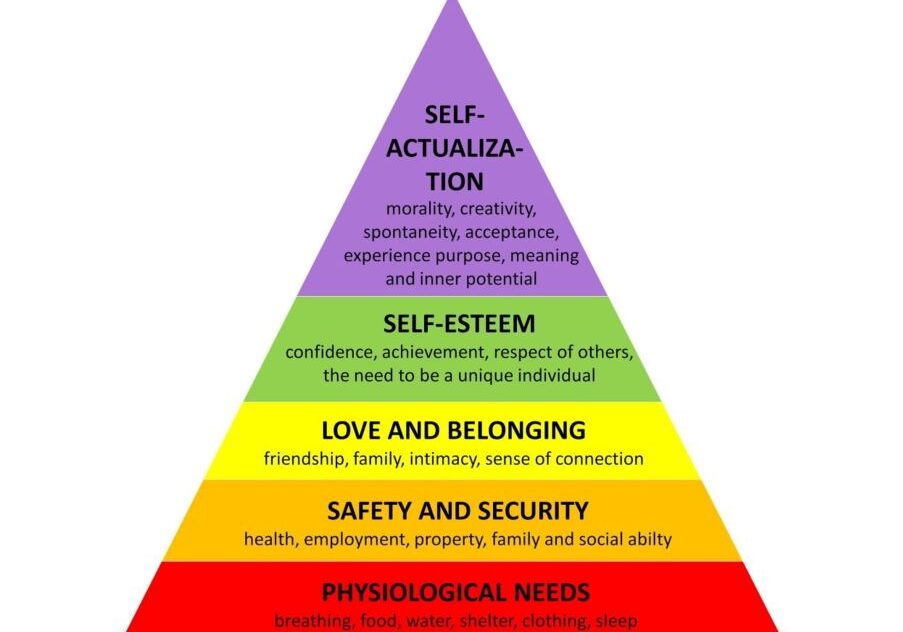

More broadly, just because our algorithms are designed to find some kind of efficient survival frontier, that doesn’t mean we should blindly go along with it. There are lots of great things besides survival higher on Maslow’s hierarchy, and anxiety works against those.

For example, when we look at older doctors helping COVID-19 patients, we think of them as courageous, aspirational figures, even though their choice entails an increased risk of contracting the virus and dying.

So to answer your question, I’d suggest that the best approach is to try to reduce anxiety far beyond what feels familiar, and use the mental cycles that open up to pursue something that feels meaningful and significant.

Your thesis takes inputs from multiple disciplines as well as, it seems, an underlying point of view which is something like Yuval Harari’s “everything is an algorithm.” Are there particular thinkers or researchers that have inspired your perspective?

Definitely. In terms of research, my top 5 inspirations were:

- Susumu Tonegawa for his insight on how the immune system anticipates threats through random recombination

- Claude Shannon for formalizing the idea of “surprise” in information theory

- Rudolf Kálmán for his insights on how to extract signals from noisy systems

- Harry Markowitz for the ideas of Modern Portfolio Theory

- Richard Dawkins for recognizing that what’s best for our genes isn’t always what’s best for us

More recently, I credit Brian Christian’s Algorithms to Live By for showing how we can view our own behavior through the lens of what we would program machines to do. And I credit ET for its analysis of self-reinforcing Narrative machines, which influenced my model of dynamic attention and social contagion.

Thanks, Adam. You can read Adam’s trilogy of blog posts on the Anxiety Algorithms here: The Anxiety Algorithm, The Paranoia Parameter, and The Contagion of Concern.
